Core Analysis of UpScrolled’s Digital Moderation Challenges

UpScrolled, a social media platform that surged past 2.5 million users following a significant ownership shift, has hit a major roadblock: moderating harmful content effectively. Reports indicate that the platform has struggled to address hate speech and racial slurs, raising concerns among users and industry analysts alike.

The Anti-Defamation League has flagged UpScrolled as a platform where extremist content flourishes, a label that could severely undermine its credibility. At a time when user trust is paramount, UpScrolled’s struggle to police problematic usernames and hashtags is a cautionary tale for emerging platforms. According to a recent report by the Digital Media Association, nearly 60% of users prioritize content moderation when choosing a social media platform, which underscores the need for companies to build robust moderation strategies as they grow.

In a video response, UpScrolled’s founder Issam Hijazi acknowledged the problems with harmful content and committed to improving the platform’s moderation capabilities. The intent to foster a respectful online community is commendable, but the platform’s current moderation tools have not kept pace with its user growth.

This scenario reflects a broader trend across the industry. Platforms like Bluesky faced similar issues in managing user-generated content as they rapidly scaled. The Social Media Research Institute recently published findings indicating that platforms experiencing rapid user growth often encounter significant challenges in enforcing community guidelines effectively, which can lead to deteriorating user trust and potential legal ramifications.

Second-Order Effects

While the immediate effects of UpScrolled’s moderation challenges may seem limited to user dissatisfaction and potential backlash, the second-order effects could be far-reaching. As users encounter unmoderated hate speech and extremist content, their trust in the platform erodes, and the resulting attrition could hinder future growth. Research from the Pew Research Center indicates that users are more likely to abandon platforms they perceive as unsafe or unwelcoming, creating a vicious cycle that can suppress engagement and profitability.

Moreover, the presence of harmful content could attract regulatory scrutiny. Governments worldwide are increasingly holding social media platforms accountable for the content they host. If UpScrolled continues to struggle with moderation, it could face legal challenges leading to costly fines or restrictions on its operations. A report from the International Journal of Cyber Law indicates that platforms that fail to adequately address harmful content risk legal battles that divert resources away from innovation and user engagement.

Additionally, the reputational damage of being labeled a haven for hate speech can be long-lasting. Users may share their negative experiences on other platforms, creating a ripple effect that deters new users from joining. Over time, such damage could stall UpScrolled’s growth trajectory and push other emerging platforms to treat moderation as a core part of their business strategy.

Data & Competition

The competitive landscape of social media is unforgiving, especially for platforms like UpScrolled that are navigating the complexities of content moderation. The current climate has left many questioning which platforms will emerge as winners and which will falter under the weight of their own unchecked growth.

Winners in this scenario are likely to be established platforms that have invested heavily in moderation technology and user safety measures. For instance, Facebook and Twitter (now X) have devoted significant resources to automated detection systems and human moderation teams to tackle harmful content. These investments have helped them retain their user bases even amid controversies.

On the other hand, platforms that fail to prioritize moderation may see a decline in user engagement and retention. UpScrolled’s struggle serves as a stark reminder that the cost of neglecting moderation can be steep. A report by the Social Media Analytics Group suggests that platforms with inadequate moderation tools face user attrition rates of up to 40% within the first year of operation.

The market impact is clear: as users become more discerning in their platform choices, those that prioritize safety and community standards will likely outperform their competitors. The Digital Media Association’s findings indicate that platforms with strong community guidelines and effective moderation are not only more likely to retain users but also attract new ones through positive word-of-mouth.

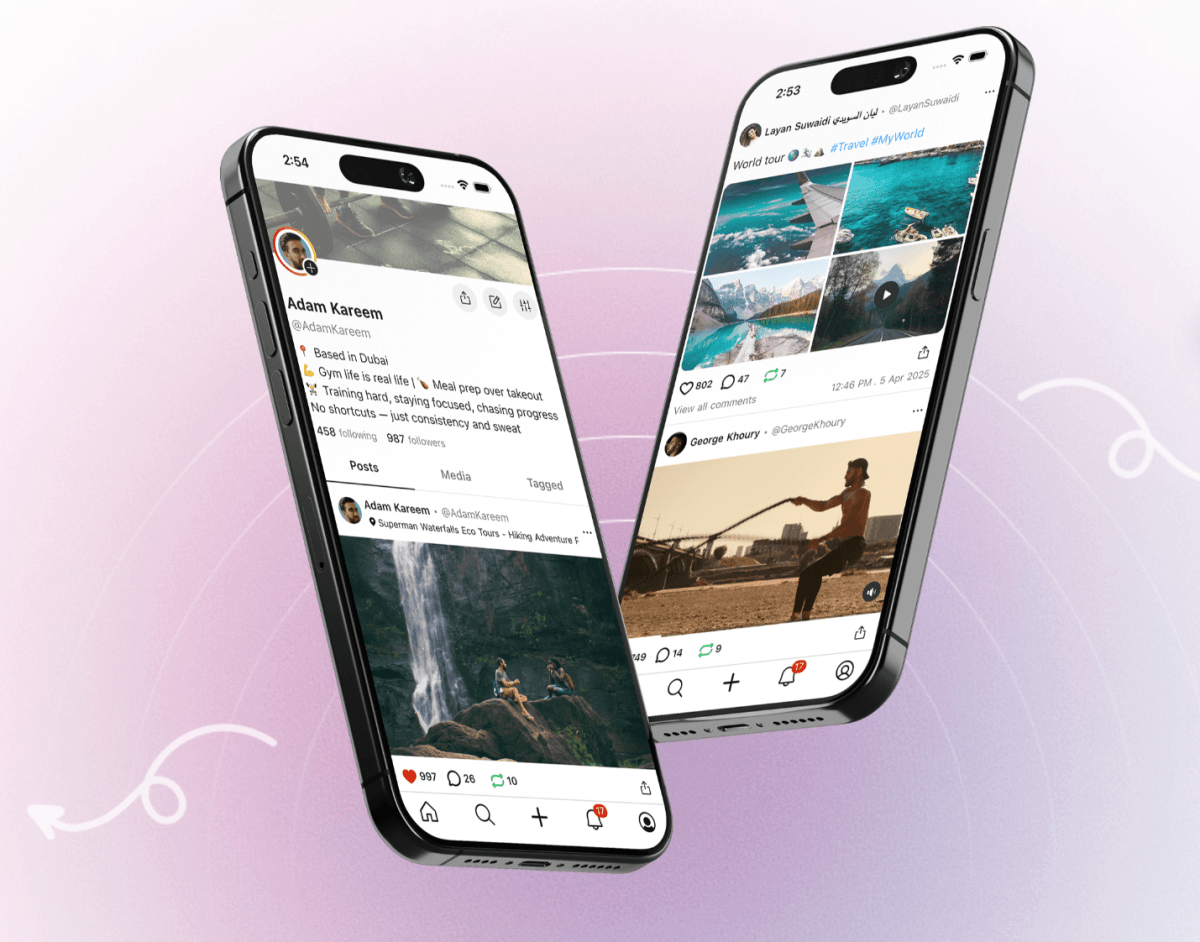

Why this visual matters: This visual highlights the critical intersection between digital moderation strategies and social media growth. Understanding the dynamics of digital moderation is vital for platforms aiming to foster safe and engaging online communities.

Frequently Asked Questions

What are the main challenges facing UpScrolled?

UpScrolled is currently grappling with moderating harmful content, particularly hate speech and racial slurs, amid rapid user growth. This has raised concerns among users and industry analysts about the platform’s ability to maintain a safe online environment.

How does content moderation impact user trust?

Content moderation plays a crucial role in user trust. Platforms that fail to adequately address harmful content risk alienating users, leading to increased attrition rates and a damaged reputation. Users are more likely to abandon platforms perceived as unsafe or unwelcoming.

What are the potential legal ramifications for platforms with poor moderation?

Platforms that struggle with moderation may face regulatory scrutiny and legal challenges, which can result in costly fines or operational restrictions. These pressures can divert resources away from innovation and user engagement, further slowing growth.

How can emerging platforms ensure effective moderation?

Emerging platforms can prioritize robust moderation strategies by investing in advanced technology, hiring dedicated moderation teams, and developing clear community guidelines. This proactive approach can help foster a safe and engaging user experience, ultimately supporting long-term growth.

Meet the Analyst

Marcus Vance, Tech Editor – With over a decade of experience in digital media analysis, Marcus specializes in exploring the intersection of technology and user engagement. His insights provide valuable perspectives for entrepreneurs navigating the evolving landscape of social media.

Last Updated: March 2026 | HustleBotics Editorial Team